DORA Metrics: The Four Numbers That Tell You If Your Team Is Healthy

Deployment frequency, lead time, change failure rate, time to restore. Here's what DORA metrics actually mean, what good looks like, and how to find out where your team currently sits.

Published on April 9, 2026

There’s a question I’ve seen teams avoid more than almost any other: “How do we actually know if we’re getting better at shipping?”

Gut feel only gets you so far. A team can feel productive, with tight standups, PRs moving, and a shrinking backlog, and still be shipping slowly and breaking production more often than it should. The problem with gut feel is that it measures effort, not outcomes.

The DORA metrics, four measurements developed by the DevOps Research and Assessment team, are the closest thing the industry has to an honest answer. They’ve become the standard benchmark for engineering delivery performance, and for good reason: they’re simple, measurable, and they directly reflect what your users actually experience.

The four metrics

Before we can improve them, it helps to be clear on what each one is actually measuring:

- Deployment Frequency: How often your team successfully releases to production. This isn’t about how often you merge code. It’s about how often your users actually get new software.

- Lead Time for Changes: The time from a change being committed to it running in production (merge-to-production is a common, easier-to-measure proxy). A long lead time usually points to slow review processes, large batch sizes, or release windows that only happen once a week.

- Change Failure Rate: The percentage of deployments that cause a failure in production: bugs that require a hotfix, a rollback, or a patch release. This is the metric that usually gets used to justify slowing everything else down.

- Time to Restore Service: How long it takes to recover when something does go wrong. This one is often overlooked, but it’s arguably the most important. Incidents are inevitable, and the teams that handle them best are the ones who can move fast when it matters.

What does “good” actually look like?

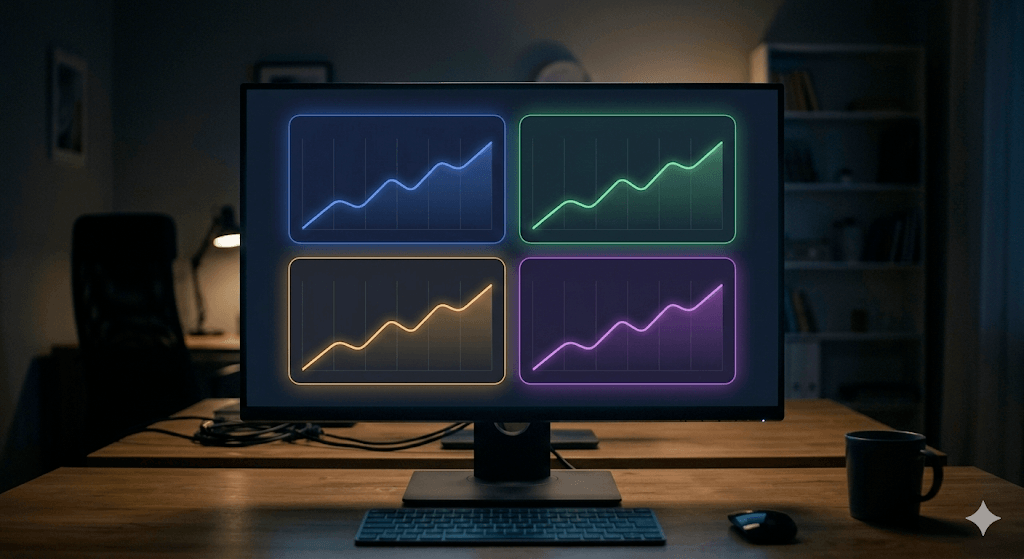

The DORA research categorises teams into four performance tiers: Elite, High, Medium, and Low. Here’s roughly where the benchmarks sit, with Medium and Low combined into one column:

| Metric | Elite | High | Medium/Low |
|---|---|---|---|
| Deployment Frequency | On-demand (multiple per day) | Weekly to monthly | Monthly or less |
| Lead Time for Changes | Under an hour | 1 day – 1 week | 1 week – 1 month |
| Change Failure Rate | Under 5% | 5–10% | 10–15%+ |
| Time to Restore Service | Under 1 hour | Under 1 day | 1 day – 1 week |
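
If you'd rather check a number against the table in code, here's a minimal Python sketch. The function names are mine, and the thresholds are only the rough ones from the table above, not official DORA cut-offs. I've stuck to the two metrics whose bands don't leave gaps between tiers.

```python
from datetime import timedelta


def change_failure_rate_tier(rate: float) -> str:
    """Map a change failure rate (a fraction, e.g. 0.12 for 12%) to a tier."""
    if rate < 0.05:
        return "Elite"
    if rate <= 0.10:
        return "High"
    return "Medium/Low"


def time_to_restore_tier(duration: timedelta) -> str:
    """Map an average restore time to a tier."""
    if duration < timedelta(hours=1):
        return "Elite"
    if duration < timedelta(days=1):
        return "High"
    return "Medium/Low"
```

Calling `change_failure_rate_tier(0.12)` puts a 12% failure rate in the Medium/Low column, and `time_to_restore_tier(timedelta(minutes=30))` lands in Elite.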

Most teams I’ve spoken to sit somewhere in the Medium tier and are working towards High. The jump from High to Elite is where your release process starts to matter as much as your engineering quality, and it’s where feature flagging starts doing the heavy lifting.

To make these numbers feel real: picture a team of eight engineers shipping every two weeks. Standup is tight, the code quality is solid, engineers are engaged. But they’re sitting at Medium across the board. Lead Time is around a week, Change Failure Rate hovers near 12%, and when something breaks on a Friday it tends to ruin someone’s weekend because restoring service takes most of a day.

That’s not a talent problem. It’s a process problem. And it’s more common than most engineering managers want to admit.

If you want a real-world version of this story, I wrote about a team I led at Qoria where we were in exactly this situation. We dropped Lead Time by 83%, reduced batch size by 94%, and tripled deployment frequency, and the data tells the story better than I can summarise here. Full write-up on Substack.

How to find out where you currently sit

The good news is that most of this data already exists in your tools. You just need to pull it out.

- Deployment Frequency: Count your production deploys over the last 90 days and divide by the number of weeks (about 13) to get deploys per week. Your CI/CD pipeline logs (GitHub Actions, CircleCI, etc.) will have this.

- Lead Time for Changes: Pick 10 recent PRs and calculate the average time from merge to production deploy. This lives in your git history and deployment logs.

- Change Failure Rate: Divide the number of deployments that required a hotfix or rollback by your total deployments over the same period. Your incident tracker or post-mortem log is the source here.

- Time to Restore Service: Average the resolution time across your last 10 incidents. If you don’t have this written down anywhere, that’s worth knowing too.
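
Once you've exported that data, the arithmetic is short. Here's a rough Python sketch of the four calculations described above. The `Deploy` and `Incident` record shapes are hypothetical, so map them onto whatever your pipeline logs and incident tracker actually give you. Each function assumes it gets at least one record.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import List


@dataclass
class Deploy:
    merged_at: datetime    # when the PR was merged
    deployed_at: datetime  # when that change reached production
    failed: bool = False   # True if it needed a hotfix or rollback


@dataclass
class Incident:
    started_at: datetime
    resolved_at: datetime


def deployment_frequency(deploys: List[Deploy], window_days: int = 90) -> float:
    """Production deploys per week over the window."""
    return len(deploys) / (window_days / 7)


def lead_time(deploys: List[Deploy]) -> timedelta:
    """Average merge-to-production time."""
    seconds = mean((d.deployed_at - d.merged_at).total_seconds() for d in deploys)
    return timedelta(seconds=seconds)


def change_failure_rate(deploys: List[Deploy]) -> float:
    """Fraction of deploys that needed a hotfix or rollback."""
    return sum(d.failed for d in deploys) / len(deploys)


def time_to_restore(incidents: List[Incident]) -> timedelta:
    """Average time from an incident starting to it being resolved."""
    seconds = mean((i.resolved_at - i.started_at).total_seconds() for i in incidents)
    return timedelta(seconds=seconds)
```

Feed `lead_time` your 10 recent PRs and `time_to_restore` your last 10 incidents, and you have a baseline to compare against next quarter.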

DORA also runs an annual State of DevOps survey with a quick self-assessment tool if you want a shortcut. It won’t be as accurate as pulling your own data, but it’ll tell you which tier you’re in within a few minutes.

The trap most teams fall into

The instinct when you want to improve these numbers is to focus on Change Failure Rate, because failures feel like the most visible, most painful problem. So teams slow down their release process: more approvals, bigger test suites, longer staging cycles, no deploys on Fridays.

The problem is that this approach treats speed and stability as a trade-off, and they’re not. What the DORA research actually shows is that elite teams deploy more frequently and have lower failure rates. They’re not choosing one over the other. They’ve just changed how they deploy.

The key insight is this: deployment is a technical event. Release is a business decision. When you treat them as the same thing, every deployment is a high-stakes moment. When you separate them, using feature flags to keep new code hidden from users until you’re ready, the risk profile of each deployment drops dramatically.

If the no-deploy Fridays rule is something your team leans on, last month I wrote a practical framework for breaking that habit that goes into the mechanics in more detail.

How feature flags move all four metrics

Deployment Frequency and Lead Time

When new code is wrapped in a feature flag, developers can merge and deploy continuously without waiting for a release window or holding a PR until the whole feature is done. The code sits safely in production, invisible to users, until the team is ready. This removes the artificial batching that inflates Lead Time and suppresses Deployment Frequency. You stop shipping once a week out of fear, and start shipping many times a day because there’s no reason not to.

For the eight-engineer team from above, this alone can cut Lead Time from a week to a day. Not because the engineers are writing code faster, but because the code stops sitting in a queue waiting for a release window.

Change Failure Rate

Canary releases, where you roll a feature out to 5% of users before widening to everyone, let you catch failures at a scale where the impact is contained. If the canary starts showing errors, 95% of your users are completely unaffected. You fix the issue, refine the flag settings, and try again. Over time this has a compounding effect on your Change Failure Rate because you’re never betting everything on a single deployment.
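
One common way to pick that 5% is to hash each user ID into a stable bucket from 0 to 99 and compare it against the rollout percentage. The sketch below shows the general technique in Python. It isn't how any particular flagging tool does it, and `bucket` and `in_canary` are names I've made up for illustration.

```python
import hashlib


def bucket(flag: str, user_id: str) -> int:
    """Deterministically map a (flag, user) pair to a bucket from 0 to 99."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def in_canary(flag: str, user_id: str, percent: int) -> bool:
    """True if this user falls inside the rollout percentage for this flag."""
    return bucket(flag, user_id) < percent
```

Because the bucket depends only on the flag name and the user ID, the same user gets the same answer on every request, and anyone inside the 5% group stays inside it when you widen to 50%.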

Time to Restore Service

This is the one where feature flags have the most dramatic effect. Without them, restoring service after a bad release means identifying the bad commit, coordinating a rollback or hotfix, and deploying again, often under pressure with half the team asleep. With a kill switch in your dashboard, the fix itself is a single toggle that takes effect almost immediately. You toggle the flag off, users are routed back to the working behaviour, and your team can investigate calmly rather than frantically.

That eight-engineer team with the day-long Friday incidents? With a kill switch, that becomes a five-minute job.
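
To show why there's no redeploy involved, here's a minimal Python sketch of a kill switch. `FlagStore` is a hypothetical in-memory stand-in for a flag dashboard, and `guarded` is a helper name I've invented. A real setup would read flag state from your flagging service instead.

```python
from typing import Any, Callable, Dict


class FlagStore:
    """Hypothetical in-memory stand-in for a flag dashboard."""

    def __init__(self) -> None:
        self._flags: Dict[str, bool] = {}

    def set(self, name: str, enabled: bool) -> None:
        self._flags[name] = enabled

    def is_enabled(self, name: str) -> bool:
        # Unknown flags default to off, so new code stays hidden until enabled.
        return self._flags.get(name, False)


def guarded(
    store: FlagStore,
    flag: str,
    new_path: Callable[..., Any],
    old_path: Callable[..., Any],
) -> Callable[..., Any]:
    """Wrap two code paths; the flag is checked on every call."""

    def handler(*args: Any, **kwargs: Any) -> Any:
        if store.is_enabled(flag):
            return new_path(*args, **kwargs)
        return old_path(*args, **kwargs)

    return handler
```

Because the flag is read at call time, `store.set("new_search", False)` sends the very next request down the old path while the new code stays deployed.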

Measuring them is the starting point, not the end

One thing worth saying: DORA metrics are a compass, not a target. Teams that start optimising directly for the numbers, artificially inflating deployment frequency with trivial changes for instance, miss the point entirely. The metrics are useful because they reflect genuine engineering health. Improving them by actually changing how you ship is what matters.

If your Change Failure Rate is high, the answer isn’t to deploy less often. It’s to make each deployment safer. If your Lead Time is long, the answer isn’t to cut corners on review. It’s to reduce batch sizes and remove the things artificially holding code back from production.

Feature flags address both of those root causes directly, which is why teams that adopt them tend to see movement across all four metrics, not just one. That’s the point worth taking away from the DORA research: the practices that improve speed are the same ones that improve stability. They reinforce each other.

I built RocketFlag because improving these numbers shouldn’t require an enterprise contract or a dedicated platform engineering team. Two lines of code, a free plan, and a fixed price that doesn’t go up when your deployment frequency does.

The metrics should go up. Your bill shouldn’t.

– JK @ RocketFlag